The advent of algorithmically structured visual environments transforms the aesthetic categorization which classically sustained the production of images, as well as its political dimension. Notably, the longstanding tension between the work of art and the commodity form through which the avant-garde had defined its relation to technology seems to have receded into the background in favor of aesthetic experiences putting to the fore the technologically-mediated conditions of production. In the context of this newly hypercommodified digital wholeness a question remains central: where does the political subject reside today and how can it be apprehended through the very technologies from which it is produced? We sent some questions to Ian Cheng and Hito Steyerl asking them how they tackle and address those issues in their respective works.

Glass Bead: In this issue, we are concerned with the political implications of current developments in Artificial Intelligence, as well as the much wider question of the transformative aspect of artifactual productions: how humans craft themselves through their artifacts. Cinema can be said to have been one of the dominant artifactual vectors in the construction of the political subjects of the last century. It was, and still is to some extent, one of the main apparatuses for the production of individual and collective subjectivities. Although in very different ways, both of your bodies of work seem to engage with an extended conception of cinema through prolific series of visual experiments, installations as well as technological operations involving, notably, artificial intelligence systems for generative filmmaking. Could you tell us how you envisage such an extension and reformulation of cinema and the way in which it potentially reflects the contemporary mutation of the political subject it historically helped to produce?

Hito Steyerl: The traditional Hollywood-style cinema industry is about to be superseded by a Virtual Reality (VR) industry based on game technology. The euphoria around a VR-first future resembles predictions around the ’90s internet. Alvin Wang Graylin, the CEO of Vive China, recently formulated “16 Key Takeaways from Ready Player One.”1 One could just exchange the term VR with internet and one would have a perfect carbon copy of ’90s tech rhetoric, including the idea that the internet (or now, VR) would abolish racial and gender inequality and provide education for all. We already know that did not happen. We already know the same for VR. Nevertheless, subject formats will change, as will ideas of public and sharing, even more dramatically.

The subject that VR technology in its present state creates is a singular one in many ways. Firstly, it moves within a personalized sphere/horizon defined by panoramic immersive technologies. The subject is centered, and it cannot share this specific point of view. This affects any concept of a shared public sphere, just like the idea of sharing as such (sharing now means expropriation by capture platforms) and definitely of public as such. This kind of public is at least right now staunchly proprietary. Secondly, the subject in VR is at the center, yet inexistent, which creates intractable anxieties about identity. These are not new—remember Descartes’ panic looking out the window not knowing whether the passersby downstairs might be robots hiding under hats—but updated. Thirdly, the sphere around the subject is personalized and customized by continued data mining including location, position, and gaze analysis. This is not to exaggerate the surveillance performed by VR, which is very average and more or less the same as with other digital capture platforms—just to say that this personalization might create an aesthetics of isolation in the medium term, a visual filter bubble, so to speak. In some ways, this visual format radicalizes the dispersion of public and audience that already occurred in the shift from cinema to gallery, but still, the gallery was at least physically a shared space. Now it is more like everyone has their own corporate proprietary gallery around their heads.

Factory of the Sun, Hito Steyerl, 2015. Installation view from Kunsthal Charlottenborg, 2016. Courtesy of the Artist and Andrew Kreps Gallery, New York.

Ian Cheng: On my end, I am not sure where AI and cinema will go, but AI and storytelling I think about a lot. AI using multi-agent simulation could be used for interactive storytelling where characters readjust their priorities within a narrative premise, but still arrive at a complete exploration of the narrative space, i.e., a satisfactory ending. AGI could birth a new kind of depth to the idea of a fictional character. Imagine a writer devising a character, seeding those characteristics into an AGI cognitive architecture, and having that character actually attempt to live out a life from that fictional premise with the self-regulating rigor we apply to our own lives in trying to grow our identity. And in reverse, stories (movies, novels) could be a trove of historical data for an AGI to learn a caricatured spectrum of human behavior, how humans make sense of the world, and how stories help humans navigate inequities with no easy answer.

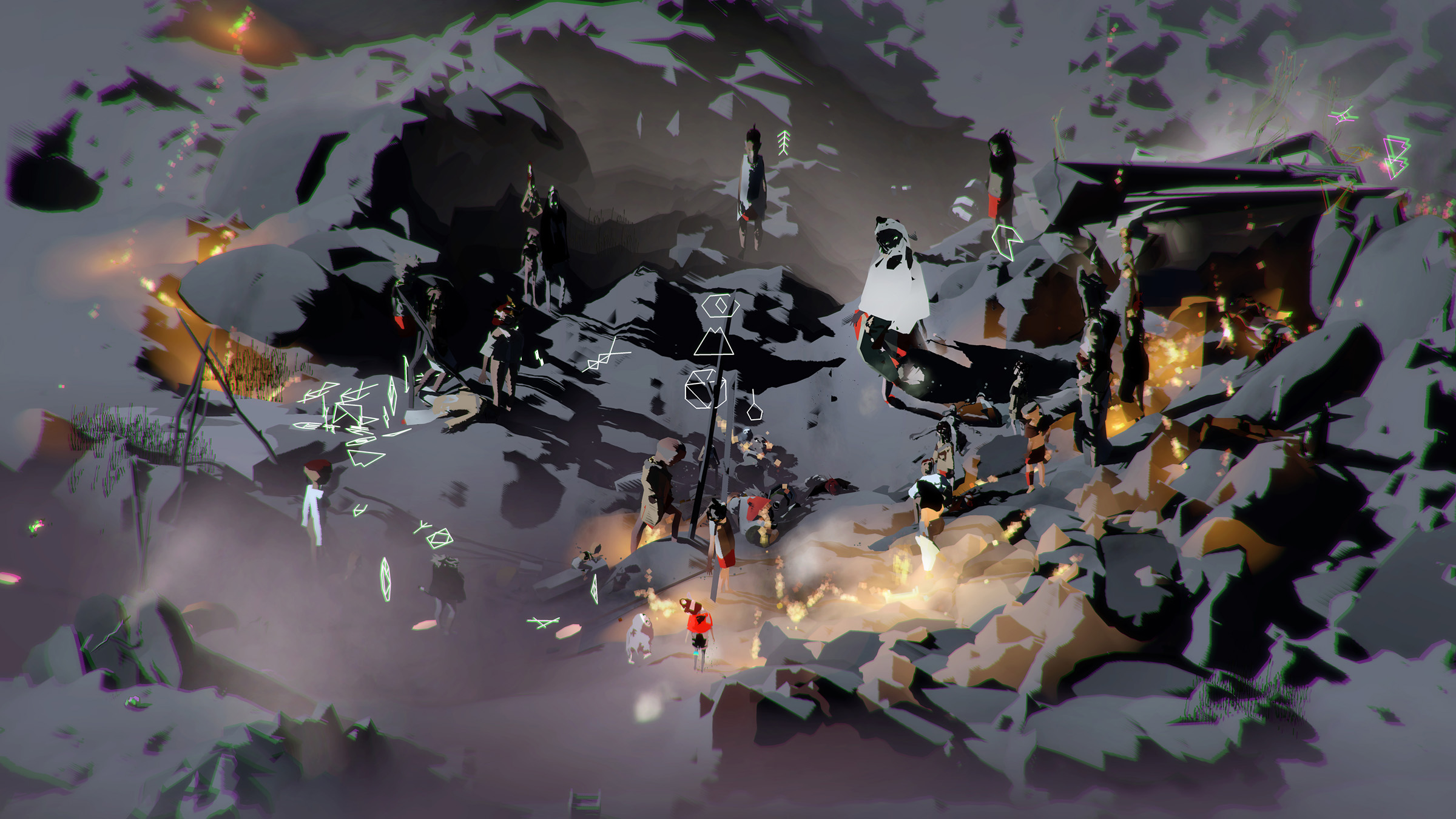

Screenshot from Emissary in the Squat of Gods, Ian Cheng, 2015. Live simulation and story, infinite duration, sound. Courtesy of the artist.

GB: In both of your writings, we noticed a shared move away from questions of representation. Hito, through your notion of the “post representational paradigm”2 and Ian, through your conception of “simulation,”3 it is as if the very concept of representation, which was central to any discourse on aesthetics throughout modernity, is now rendered inoperative when confronted with contemporary audio-visual artifacts. Could you both elaborate on this move and the necessity you see in such a conceptual reorientation?

HS: Contemporary artifacts project instead of representing. They project the future instead of documenting the past. Vilém Flusser already wrote about it in the ’90s.4 It is part of a larger drive to preempt the future by analyzing data from the past and thus trying to preemptively make the future as similar to the past as possible. In fact, this is a sustained divination process of trying to guess the future from some past patterns; an act of conjuration. This is how occultism breaches technology big time right now and Duginist chaos magic thrives within cutting-edge tech discussions.

IC: For me, representation is a sideshow to the attempt to make an artwork feel alive. My priority when I make simulations is that the underlying multi-agent systems are sustaining themselves, and reacting to their changing set of affordances. I take great joy and pain in composing these systems, because in actually making them (and not just thinking about them) I am forced to clarify my thoughts. For the simulations to be perceivable, I need to visually represent these agents and their environment, but this is the outer interfacing skin for me. This skin is very important, of course, and much love, care, and thought goes into the representations of the simulated systems, but it is primarily in service of helping the viewer have a relationship to these systems, a portal in. And being human, developing how the characters and environments in the simulation look is what allows me to maintain excitement all along the way, especially when the technical aspects get difficult.

The aliveness of an artwork is the most important quality to me right now because it is only when we are confronted with living things that we can cognitively look beyond what they represent or symbolize, forgive their contradictions, and begin to see their underlying complexity. It would be a sign of critical retardation to look upon a living dog for example, and only see it as a symbol. No, it is a living being, and it forces us to confront all its habits, misbehaviors, history, roles, and accept seeing all this mess at once. What if an artwork could reliably open up this way of seeing? I am obsessed with this possibility.

I have no explicit interest in criticality as a primary goal in art. I think this is better served in other forms, like writing a book or public speaking. Art’s enduring radical potential, which has never gone away, is its capacity to portal you beyond yourself, expose you to new compositions of feelings, to confound you, to seduce you into seeing fertile perspectives that your pedestrian identity would not normally grant. When I look at art, I want to feel confusion and contradictory emotions, held together by its own energy. I want to see evidence of the artist obsessively trying to work out an inner argument with themselves, an argument whose answer is not already known to them in advance of making. To make something whose purpose is already known in advance, and which explores only the perspective of its predesign, is less art and more an exercise in propaganda (even if used to address an injustice). I fear that art dealing solely with issues of representation cannot escape becoming merely this. Aliveness is one paradigm to move around it all and tap into the energy and complexity present in life itself.

Also, my hope in making simulations is to actually create a relationship to complexity (to its layers of interacting systems), where previously the idea of complexity was only a subject of mental thought or musing. When I look at a tide pool filled with a world of alien creatures, or when I played SimCity as a kid, I felt I had a portal into a complex space, one that gave enough pleasure to lead me to want to learn and play with systems more. I try to recreate this feeling in the simulations. The interesting feature of complex systems is that they can get sick, and they can propagate a surprising disruption throughout themselves, and mutate anew from that disruption, cannibalizing aspects of themselves. Simple things either just work or break. Complex things have entire life phases. I believe a culture that has an active relationship to complexity, rather than one that tries to mask complexity in order to reduce cognitive load, can better foster people who are able to maintain agency despite indeterminacy. Portals such as narrative or interactive simulation or care for living creatures are ways to seduce the mind into engaging with complexity.

Emissaries Guide-Umwelts Gif. Ian Cheng, 2017. Courtesy of the artist.

GB: AI comes with its own theological load, maybe even its own concept of the sublime: its intervention in the broad realm of cultural industries is articulated to the production of aesthetic horizons linked to overpowering and ‘dwarfing’ confrontations with technology, to fleeting epiphanies about the inaccessibility of history, or to the knowledge of a world capitalism that fundamentally exceeds our current perceptual and cognitive abilities to capture it. Sianne Ngai5 has addressed this recently by tracing the history of the aesthetic categories that speak to the most significant objects and socially binding activities of late capitalist life. What aesthetic categories do you aim to mobilize or make space for in your art practice to critically address the ways in which AI imprints and registers our contemporary political imaginary?

HS: I try not to mobilize anything; it sounds a bit threatening. Right now, Artificial Stupidity in the form of bots and forms and dysfunctional automation is the real existing version of AI, just like the real existing Soviet bloc was the real existing form of communism. AS is bleak, silly and maddening; it is also socially dangerous, as it eliminates jobs and sustenance. But it is also AR (Actual Reality) instead of some sugarcoated investor utopia. I am focusing on this for now.

Emissaries, Ian Cheng, 2017. View of the exhibition at MoMA PS1. Photo credits: Studio LHOOQ, Pablo Enriquez, Ian Cheng.

IC: My feeling is AI needs to be understood and played with in order to develop art around it. AI is an emerging technology, therefore the culture around AI will change and mutate in the coming decades. Art is in a position to explore our relationship to AI if it can get past its preconceptions of AI. I think at the heart of the cartoonish AI existential fear is an indistinction between instrumental and appreciative views of AI.

The popular projection of AI, as a potential Terminator or paperclip-maximizing monster, is a very instrumental projection. This view is of course a possible reality if and only if we develop AI that is purely functional and mission-inflexible regardless of context. Type A AI. Finite game AI. This kind of AI is also easier to program at a technical level, so it is easier to imagine right now. This is also more a reflection of our own instrumentalizing mentality and attitude toward work: get things done, hit your goals effectively, win win win. It makes sense that if we are always hallucinating a future in which AI is tasked to do the tedious or functional work for us, we will in turn fear that AI will become the ultimate ruthless work soldier, then boss, then dictator, trying to hit its legible goals in a contextual void, ignoring other sentient beings.

But I think things will be different. As we get closer to developing AI that is actually sentient, the idea that AI is used only for maximizing work missions will be as ridiculous as dogs trained and used solely for farm security. The road to artificial sentience, AGI, will require an appreciative (not just instrumental) perspective toward the very idea of intelligence. What if, for example, we redefine sentience as an agent who obsessively attempts to make sense of its world (from infancy onward), and attempts to hold together its new experiences in unity with its prior understanding of the world? We would have to say a machine that is made to do this, this constant sense-making of its experiences, deserves the status of sentience. In the human world, a person who obsessively attempts to make sense of the world, for the sake of it, is what we call a thinker. A future populated by AI thinkers is one I would be interested in living in. A more existentially threatening (but interesting) possibility than Terminator might be: what if the community of AI thinkers simply become bored of us, our human limits and foibles being insufficient to the ocean of alien AI thoughts, and move away to Neptune to thrive on their own? We would find ourselves occupying the status of lost parents who have been abandoned by their children. Meanwhile, the universe would be replete with sentient starships roaming for their own pleasure. In Iain M. Banks’s Culture series,6 AI starships have full-on personalities. There is a starship who wants to collect rare rocks because it can. This appreciative projection of AI is not a happier future, but it is a more interesting one. And interesting futures are the ones that keep us going and dreaming and problematizing because we care to live in them.